By Dimitris Mavrakis, Senior Research Director, ABI Research April 3, 2026

Collected at: https://www.rcrwireless.com/20260403/analyst-angle/ai-grid-telecoms-race-abi

Telcos are exploring NVIDIA’s AI grid, but edge GPU deployment lacks a strong latency or cost case today; only physical AI use cases justify it, with gradual rollout expected toward future 6G networks.

The diffusion of AI in telco networks continues with NVIDIA’s announcement about an AI grid concept, as well as recent telco announcements about adoption of AI infrastructure, including from T-Mobile US, Comcast, SoftBank, and others. For mission-critical applications, token generation will likely need to take place at the far edge of the network to take advantage of low latency, particularly for physical AI applications like robotics, connected cars, and other near-real-time use cases.

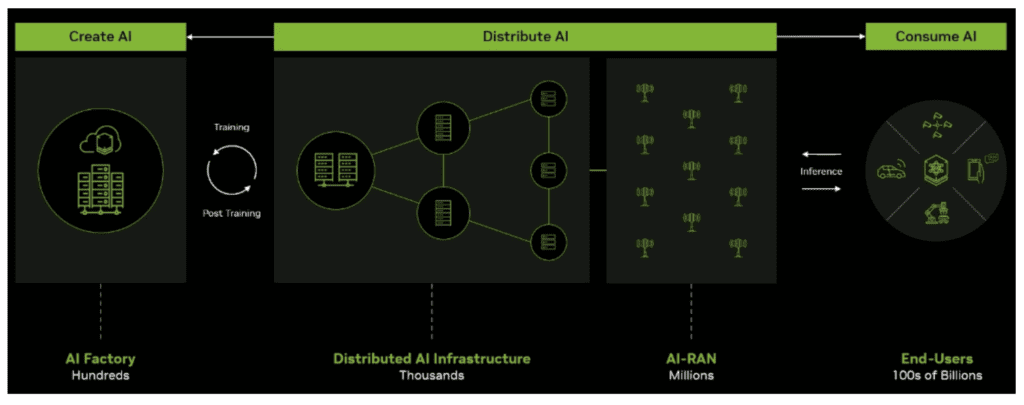

T-Mobile has argued that physical AI begins with intelligent networks and NVIDIA has said that telcos are in an ideal position to become a key component of this new AI grid concept. Naturally, the company that will benefit the most from its realization is NVIDIA, which would provide both hardware and software. The graphic below is NVIDIA’s interpretation of the AI grid, which is purportedly already being built by telcos.

The case for lower latency is weak

A strong, if not the strongest, argument for deploying GPUs at the near or far edge of the network is low latency, which applications with near ‘real-time’ actuation and control depend on. The example shared at GTC showed a chatbot agent with a 2,000 ms round trip versus the same agent with a 400 ms round trip, which does make a significant difference to the user experience.

But network latency does not typically contribute much to the most important ‘time-to-first-token’ (TTFT) metric. In a typical example, network ‘round-trip time’ (RTT) latency may be as high as 100 ms, but other latency parameters will apply, regardless of the position of the inference server, including DNS resolution and tunnel establishment. For a medium prompt size of 1,000 tokens, a typical prefill may take 160 ms, whereas the decoding phase may take several seconds to complete.
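As a rough illustration of the figures above, the following sketch adds up the latency components for a cloud and an edge deployment. The 100 ms RTT and 160 ms prefill come from the example in the text; the 10 ms edge RTT and the 3,000 ms decode time are assumptions chosen only for illustration ("several seconds" is not quantified here), and DNS resolution and tunnel establishment are left out because no figures are given for them.

```python
# Back-of-the-envelope latency budget for one chatbot response.
# Values marked "assumption" are illustrative and not taken from the article.

CLOUD_RTT_MS = 100.0   # network round-trip time to a central server (from the text)
EDGE_RTT_MS = 10.0     # assumption: round-trip time to an edge server
PREFILL_MS = 160.0     # prefill for a ~1,000-token prompt (from the text)
DECODE_MS = 3000.0     # assumption: "several seconds" of decoding


def ttft_ms(rtt_ms: float, prefill_ms: float) -> float:
    """Time-to-first-token, simplified to network RTT plus prefill."""
    return rtt_ms + prefill_ms


def total_response_ms(rtt_ms: float, prefill_ms: float, decode_ms: float) -> float:
    """Time until the full response has been generated."""
    return ttft_ms(rtt_ms, prefill_ms) + decode_ms


cloud_total = total_response_ms(CLOUD_RTT_MS, PREFILL_MS, DECODE_MS)
edge_total = total_response_ms(EDGE_RTT_MS, PREFILL_MS, DECODE_MS)
saving_fraction = (cloud_total - edge_total) / cloud_total

print(f"TTFT cloud: {ttft_ms(CLOUD_RTT_MS, PREFILL_MS):.0f} ms")
print(f"TTFT edge:  {ttft_ms(EDGE_RTT_MS, PREFILL_MS):.0f} ms")
print(f"Total cloud: {cloud_total:.0f} ms, total edge: {edge_total:.0f} ms")
print(f"End-to-end saving from moving to the edge: {saving_fraction:.1%}")
```

Under these assumptions, moving inference to the edge shortens the full response by under 3%, because prefill and decoding dominate regardless of where the server sits.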

Moving the inference server closer to the end user is therefore unlikely to make any material difference to the user experience. It is simply not financially viable, today or in the next two to three years, to deploy servers throughout a country to reduce this latency further. Deploying servers at the cell site is harder still to justify, because the limited subscriber base and geographical area served by a single site restrict the business case to very high-value and highly critical use cases.

The discussion is flipped entirely if the use case becomes physical AI, with the latency budget coming down to around 10 ms. In these cases, cloud inference becomes untenable and inference needs to move to the edge of the network: a 100 ms latency for an autonomous car moving at 100 km/h would mean the car would be blind for 2.8 meters. The same could be said for video surveillance, drone delivery robots, and a host of other applications.
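The 2.8-meter figure follows from a simple unit conversion, sketched below. The function name `blind_distance_m` is a hypothetical helper introduced here for illustration.

```python
def blind_distance_m(speed_kmh: float, latency_ms: float) -> float:
    """Distance a vehicle travels while waiting for an inference result."""
    speed_m_per_s = speed_kmh * 1000.0 / 3600.0  # km/h -> m/s
    return speed_m_per_s * (latency_ms / 1000.0)  # ms -> s


# The example from the text: 100 km/h with 100 ms of latency.
print(f"{blind_distance_m(100, 100):.1f} m")  # 2.8 m

# The same car with a 10 ms latency budget.
print(f"{blind_distance_m(100, 10):.2f} m")   # 0.28 m
```

At a 10 ms budget, the distance travelled without a fresh decision drops to roughly 28 centimeters.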

But the question is, what is the cost of a distributed AI grid – specifically, say, for T-Mobile in the US?

Quantifying TCO for GPU-enabled RAN

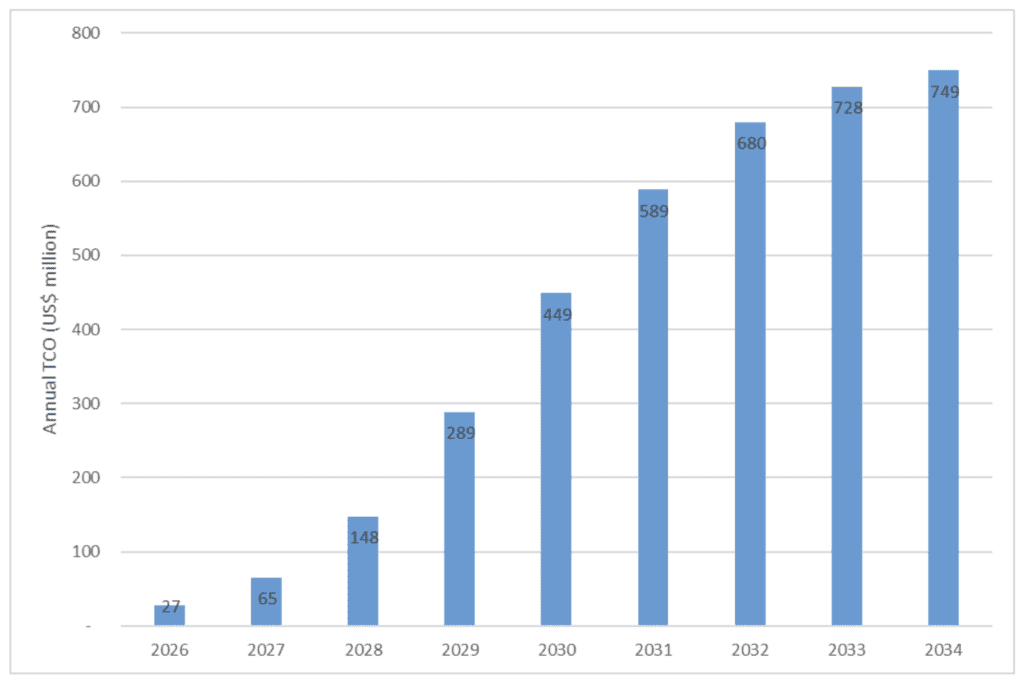

T-Mobile US said at GTC that kinetic tokens will be a massive opportunity for telcos globally, and that to take advantage of their real estate, telcos will need AI-RAN systems and GPUs in their networks. If we assume that T-Mobile US operates around 13,000 rooftop cell sites in the US, that it begins retrofitting them with AI-RAN servers (in this case an NVIDIA ARC-1 server, costing $60,000, to power three cells), and that it completes this deployment with 100% rooftop GPU coverage in 2035, then the total cumulative cost will be $3.7 billion, including deployment, cooling, and other ancillary costs.
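To put the headline number in context, the sketch below separates the server hardware from the rest of the $3.7 billion. It assumes one server per rooftop site (the text says one server powers three cells, which is read here as one three-sector site); that reading is an assumption, and the breakdown is derived arithmetic rather than a figure from the underlying model.

```python
# Rough decomposition of the cumulative TCO quoted in the text.
SITES = 13_000                  # rooftop cell sites (from the text)
SERVER_COST_USD = 60_000        # per AI-RAN server (from the text)
SERVERS_PER_SITE = 1            # assumption: one server covers a three-cell site
TOTAL_TCO_USD = 3_700_000_000   # cumulative cost through 2035 (from the text)

hardware_usd = SITES * SERVERS_PER_SITE * SERVER_COST_USD
non_hardware_usd = TOTAL_TCO_USD - hardware_usd   # deployment, cooling, ancillary
hardware_share = hardware_usd / TOTAL_TCO_USD
tco_per_site_usd = TOTAL_TCO_USD / SITES

print(f"Server hardware:      ${hardware_usd:,.0f}")       # $780,000,000
print(f"Everything else:      ${non_hardware_usd:,.0f}")   # $2,920,000,000
print(f"Hardware share:       {hardware_share:.1%}")       # 21.1%
print(f"Cumulative TCO/site:  ${tco_per_site_usd:,.0f}")   # $284,615
```

On this reading, the servers themselves account for only about a fifth of the total, with the remainder going to deployment, cooling, and other ancillary costs.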

Chart 1, below, illustrates the annual TCO for this example deployment.

Spread across nine years, the investment to deploy an AI grid becomes more manageable, assuming revenue scales accordingly. Moreover, the $3.7 billion estimate is hardly a blip in the NVIDIA universe, but telcos and their investors will need a strong business case to spend this amount, especially when it is on the order of what it costs to deploy a new-generation radio network.
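For a sense of scale, the following sketch averages the cumulative figure over the nine-year rollout. An even spread is an assumption made purely for illustration; the actual annual profile may be front- or back-loaded.

```python
# Average annual figures under an evenly spread rollout (assumption).
TOTAL_TCO_USD = 3_700_000_000   # cumulative cost (from the text)
ROLLOUT_YEARS = 9               # rollout duration (from the text)
SITES = 13_000                  # rooftop cell sites (from the text)

avg_annual_spend_usd = TOTAL_TCO_USD / ROLLOUT_YEARS
avg_sites_per_year = SITES / ROLLOUT_YEARS

print(f"Average annual spend:   ${avg_annual_spend_usd:,.0f}")
print(f"Average sites per year: {avg_sites_per_year:,.0f}")
```

Averaged this way, the program works out to roughly $411 million and about 1,400 retrofitted sites per year.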

Currently, there is no justification for deploying GPUs at the cell site, which means that AI inference will likely start out centrally deployed and then gradually fan out to cell sites. Indeed, AI inference servers will probably be deployed first in core network locations, of which there are typically fewer than 10 across a country, and then gradually spread out when the need arises for lower latency.

In many use cases, including video surveillance, autonomous driving, last-mile delivery robotics, smart glasses, and AR/VR applications, edge inference is not optional but architecturally necessary. Initial deployments of the AI grid will likely future-proof the telco network and enable incremental upgrades as adoption of these use cases widens. Telcos that understand this earliest will set the foundation for 6G and solidify their position in the AI super cycle.
