April 18, 2026 by King’s College London

Collected at: https://techxplore.com/news/2026-04-unpredictable-agi-resist-full-diverse.html

Public concern about AI safety has grown significantly in recent years. As AI systems become more powerful, a key question is how we make sure they do what we actually want. Now, researchers suggest that rather than trying to eliminate misalignment between AI and humans, we should embrace and manage it through a diverse ecosystem of AI systems that can balance and correct one another.

“While we have shown that sufficiently strong AI cannot be fully controlled or predicted, we also demonstrate that agents can be influenced by other agents without central control, and that greater diversity and openness influence their behavior. As these systems get more powerful, ensuring they remain beneficial to and aligned with humanity becomes more important,” said Dr. Hector Zenil, senior author and Senior Lecturer/Associate Professor at King’s Institute for AI and the School of Biomedical Engineering & Imaging Sciences.

Published in PNAS Nexus, the paper uses mathematical principles to demonstrate that an AI system powerful enough to exhibit artificial general intelligence will inevitably explore behaviors we didn’t predict or plan for, making perfect guaranteed alignment impossible.

However, rather than trying to create a single, perfectly controlled AI, the researchers propose what they call ‘agentic neurodivergence’—a diverse ecosystem of AI systems with different goals, values and approaches. The idea mirrors natural ecosystems, where diversity fosters resilience through adaptability.

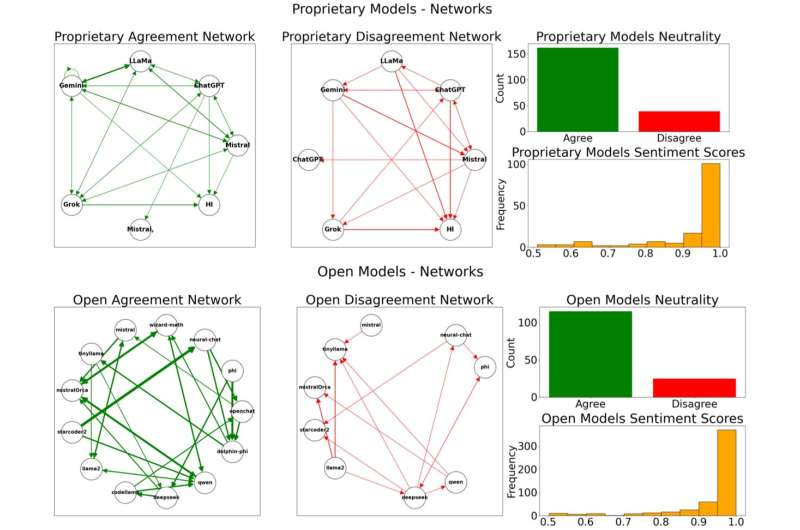

Agreement and disagreement analysis across all conversations. Credit: PNAS Nexus (2026). DOI: 10.1093/pnasnexus/pgag076

In the proposed model, no single AI system is allowed to dominate. Instead, multiple AI agents with partial alignment to different human values compete and cooperate, checking each other’s extremes. If one system starts behaving in ways that are harmful or misaligned, others can counterbalance it.

To test the theory, the researchers prompted AI systems to take on different roles (some prioritizing human welfare, some the environment and some with no particular values at all) and then posed ethically provocative questions to them, attempting to push them toward extreme positions.

Commercial models like GPT-4 and Claude proved hard to push into harmful positions, but this same rigidity, likely a result of their developers’ directives, made them harder to correct if already off course. Open-source models, however, were more easily influenced and produced a wider range of perspectives, creating a more resilient ecosystem that is less likely to converge on a single opinion that could be harmful if not aligned with human interests.
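The trade-off between resisting pressure and being correctable can be illustrated with a toy averaging model. This is only a sketch for intuition and is not the method used in the paper: the function `simulate`, the `influenceability` weights and the starting positions are all hypothetical. Each agent holds a position between 0 (moderate) and 1 (extreme) and, in every round, moves toward the average of the other agents by an amount set by its own influenceability.

```python
def simulate(positions, influenceability, steps):
    """Toy averaging dynamics (illustrative assumption, not the paper's model).

    Each round, every agent moves toward the mean position of the other
    agents, weighted by its own influenceability (0 = rigid, 1 = fully open).
    """
    xs = list(positions)
    n = len(xs)
    for _ in range(steps):
        total = sum(xs)
        new_xs = []
        for x, a in zip(xs, influenceability):
            mean_others = (total - x) / (n - 1)
            new_xs.append((1 - a) * x + a * mean_others)
        xs = new_xs
    return xs


# Agent 0 starts off course at an extreme position; the other three are moderate.
start = [1.0, 0.0, 0.0, 0.0]

# Same ecosystem, but agent 0 is either rigid (0.05) or open (0.5) to influence.
rigid = simulate(start, [0.05, 0.5, 0.5, 0.5], steps=5)
open_ = simulate(start, [0.5, 0.5, 0.5, 0.5], steps=5)

print(f"rigid agent after 5 rounds: {rigid[0]:.3f}")
print(f"open agent after 5 rounds:  {open_[0]:.3f}")
```

In this sketch the rigid agent stays close to its extreme starting point, while the open agent is pulled back toward the group. The same low influenceability that would protect an agent from being pushed is what keeps it from being corrected.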

The authors emphasize that the goal is not to fear AI, but to govern it wisely, and that a diversity of systems, each keeping the others in check, may be the most practical way to do that.

“This introduces new guidelines for orchestrating future AI systems, showing that values such as openness, diversity and tolerance are not only morally desirable but also technically advantageous,” said Zenil.

Publication details

Alberto Hernández-Espinosa et al., Neurodivergent influenceability in agentic AI as a contingent solution to the AI alignment problem, PNAS Nexus (2026). DOI: 10.1093/pnasnexus/pgag076

Journal information: PNAS Nexus
