April 10, 2026 by Santa Fe Institute

Collected at: https://techxplore.com/news/2026-04-simple-baseline-ai-machine.html

In a recent paper, SFI Complexity Postdoctoral Fellow Yuanzhao Zhang and co-author William Gilpin show that a deceptively simple forecasting strategy can outperform several leading machine learning forecasting models.

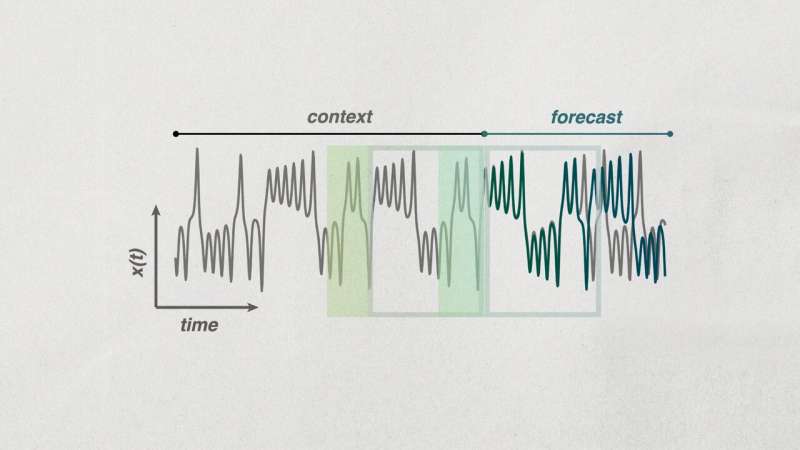

Their method, called context parroting, relies on short stretches of time-series data (or context). As it moves through the time series, it scans for similar patterns or motifs that appeared earlier in the sequence, and uses those patterns to predict what might come next.
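The matching-and-copying idea described above can be illustrated with a minimal Python sketch. It is not the authors' implementation: the function name `parrot_forecast` and the parameters `motif_len` and `horizon` are hypothetical, the series is assumed to be one-dimensional, similarity is assumed to be measured by Euclidean distance, and candidate matches are restricted to those followed by enough data to fill the whole forecast.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def parrot_forecast(context, motif_len, horizon):
    """Forecast `horizon` steps by copying what followed the best past match.

    Illustrative sketch only; names and the distance measure are assumptions.
    """
    context = np.asarray(context, dtype=float)
    if context.ndim != 1:
        raise ValueError("this sketch handles one-dimensional series only")
    n = context.shape[0]
    # Number of earlier windows that are followed by at least `horizon` points.
    n_candidates = n - motif_len - horizon + 1
    if motif_len < 1 or horizon < 1 or n_candidates < 1:
        raise ValueError("context is too short for this motif_len and horizon")

    # The most recent stretch of data is the motif we look for in the past.
    query = context[-motif_len:]
    # All earlier windows of the same length (the query itself is excluded).
    windows = sliding_window_view(context, motif_len)[:n_candidates]
    # Euclidean distance between each earlier window and the query.
    distances = np.linalg.norm(windows - query, axis=1)
    best = int(np.argmin(distances))

    # "Parrot" the values that came right after the best-matching window.
    start = best + motif_len
    return context[start:start + horizon].copy()


# Example: a sampled sine wave that repeats every 20 steps.
series = np.sin(2 * np.pi * np.arange(200) / 20)
forecast = parrot_forecast(series, motif_len=5, horizon=10)
```

On a signal that repeats exactly, such as the sine wave here, the copied segment coincides with the true continuation. On chaotic data the match is only approximate, which is consistent with the article's point that more context helps: a longer history offers more chances of finding a close match.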

Researchers need to be careful when judging the performance and intelligence of AI systems, and they need to keep working to open up the black box of how those systems make predictions, says Zhang. This paper is a reminder of why.

The paper’s three main contributions reinforce that message. First, the paper introduces context parroting as a surprisingly strong baseline for zero-shot forecasting, which means predicting the behavior of a new system from only a short stretch of data, with no prior training on that specific system.

Second, it shows that this simple approach can outperform current leading models on difficult prediction tasks such as forecasting the dynamics of chaotic systems. Finally, it explains why forecast accuracy improves as more context is provided, with the rate of improvement linked to the complexity of the system being predicted.

The paper will be presented at the International Conference on Learning Representations in Rio de Janeiro, Brazil, April 23–27. The paper is also published on the arXiv preprint server.

Publication details

Context parroting: A simple but tough-to-beat baseline for foundation models in scientific machine learning. openreview.net/forum?id=EUAXc9Hlvm

Yuanzhao Zhang et al, Context parroting: A simple but tough-to-beat baseline for foundation models in scientific machine learning, arXiv (2025). DOI: 10.48550/arxiv.2505.11349

Journal information: arXiv
