March 29, 2026 by Paul Arnold, Phys.org

Collected at: https://techxplore.com/news/2026-03-ai-benchmark-robots-chores-real.html

No matter how sophisticated they are, robots can often be indecisive and struggle with multi-step chores in the real world. For example, if you tell a robot to tidy a messy room, it might understand the goal but not know where to grab each object. It could even end up inventing steps. To address these common mistakes, Microsoft and a group of academics have developed an AI benchmark system to improve the accuracy of robot planning. The details of their work are published in a paper on the arXiv preprint server.

The problem for robots is a disconnect between the vision-language model (VLM) that creates the plan of action in natural language and the low-level motor systems that put these steps into action. While the model can produce a list of tasks, it often fails to give the mechanical system the precise coordinates it needs to touch or move anything.

Teaching the robots

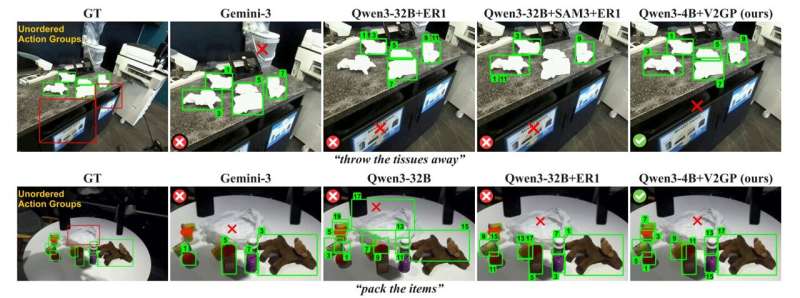

The researchers have created two tools that bridge this disconnect. The first is GroundedPlanBench, a test that uses 308 real-world scenarios from the DROID dataset to create a total of 1,009 tasks for the robots to perform. It tests the robots on both explicit instructions, such as “pick up the red bowl,” and vague instructions, such as “tidy the table.”

To help a robot pass the test, the team built a second tool, a system called video-to-spatially grounded planning (V2GP), which lets AI watch videos of humans or robots performing tasks. It breaks each video down into segments by identifying exactly when a hand or robot arm opens and closes to move an object. V2GP then turns the videos into more than 40,000 lessons that teach AI how to link a verbal command to a physical location.
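The segmentation step described above can be illustrated with a minimal sketch. It is not the researchers' code: it assumes, purely for illustration, that each video frame has already been labeled with whether the hand or gripper is closed, and the function name `segment_by_gripper` is hypothetical.

```python
from typing import List, Tuple


def segment_by_gripper(closed: List[bool]) -> List[Tuple[int, int]]:
    """Split a video into (start, end) frame spans where the gripper is closed.

    Assumption for illustration: `closed[i]` is True when the hand or
    gripper is closed in frame i. How V2GP detects this is not described
    in the article.
    """
    segments: List[Tuple[int, int]] = []
    start = None
    for i, is_closed in enumerate(closed):
        if is_closed and start is None:
            start = i  # gripper just closed: a grasp begins here
        elif not is_closed and start is not None:
            segments.append((start, i))  # gripper opened: object released
            start = None
    if start is not None:
        # The video ended while the gripper was still closed.
        segments.append((start, len(closed)))
    return segments


frames = [False, True, True, False, False, True, False]
print(segment_by_gripper(frames))  # [(1, 3), (5, 6)]
```

Each span marks one candidate manipulation step, running from the frame where the object is grasped to the frame where it is released.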

A new way to train robots?

The team tested several leading AI models (robot brains) on the 1,009 tasks in GroundedPlanBench. They found that while the models could write a good list of steps, they were bad at grounding. That is, the models couldn’t point to the right spot in a camera image to show the robot exactly where an object was located. “Spatially grounded long-horizon planning remains a major bottleneck for current VLMs,” wrote the researchers in their paper.
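To make the idea of grounding concrete, here is a hedged sketch of one way a check could work. The article does not describe the benchmark's actual scoring rule, so the point-in-box test below is an assumption, and the names `Box`, `is_grounded`, and `grounding_accuracy` are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Box:
    """Axis-aligned region of the camera image, in pixels (assumed format)."""
    x_min: float
    y_min: float
    x_max: float
    y_max: float


def is_grounded(point: Tuple[float, float], target: Box) -> bool:
    """Return True if the model's predicted point lands inside the target box."""
    x, y = point
    return target.x_min <= x <= target.x_max and target.y_min <= y <= target.y_max


def grounding_accuracy(points: List[Tuple[float, float]], targets: List[Box]) -> float:
    """Fraction of plan steps whose predicted point hits the matching target."""
    if not points:
        return 0.0
    hits = sum(is_grounded(p, t) for p, t in zip(points, targets))
    return hits / len(points)


# Two plan steps: the first point is inside its box, the second misses.
targets = [Box(100, 100, 200, 200), Box(300, 50, 360, 120)]
predicted = [(150.0, 180.0), (10.0, 10.0)]
print(grounding_accuracy(predicted, targets))  # 0.5
```

Under this assumed rule, a model could list every step correctly in words and still score poorly if its points fall outside the objects it names.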

However, after training with V2GP, the models’ performance improved significantly. “V2GP provides a promising approach for improving both action planning and spatial grounding performance, validated on our benchmark as well as through real-world robot manipulation experiments,” the researchers wrote.

The researchers still have work to do to improve their benchmark and the V2GP system so that robots can handle longer, more complex jobs. Ultimately, they hope that a standardized benchmark, rather than individual lab tests, will improve how robots perform tasks in the real world.

Publication details

Sehun Jung et al, Spatially Grounded Long-Horizon Task Planning in the Wild, arXiv (2026). DOI: 10.48550/arxiv.2603.13433

On GitHub Pages: groundedplanning.github.io/GroundedPlanning/

Journal information: arXiv
