March 26, 2026 by Sungkyunkwan University

Collected at: https://techxplore.com/news/2026-03-ai-tech-human-actions-videos.html#goog_rewarded

AI systems typically need massive amounts of training data to understand complex human actions, but in real-world scenarios it is often difficult to collect enough video of a specific action. A research team led by Jae-Pil Heo, professor in the Department of Software at Sungkyunkwan University, has developed an AI technology that accurately recognizes new actions from only a small number of example videos. The work addresses few-shot action recognition, in which an AI model must learn the characteristics of a new action, and tell it apart from others, using only a few examples.

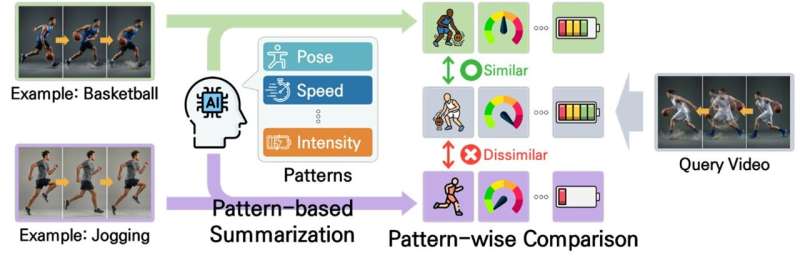

The research team’s core idea is to compare videos by efficiently summarizing only their key movements, rather than relying on conventional complex computations that compare entire videos frame by frame in temporal order. To achieve this, the team extracts and organizes key movement patterns from each video based on several criteria, enabling the AI to compare actions more effectively and identify similarities and differences more accurately.
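As a rough illustration of this idea, and not the authors' implementation, the Python sketch below condenses each video into a fixed number of pattern summaries and compares those summaries instead of aligning frames one by one. It assumes frame features are already available as NumPy arrays. The function names `summarize`, `similarity`, and `classify`, the attention-style pooling, the random pattern vectors, and the toy data are all hypothetical choices made for this example.

```python
import numpy as np

def summarize(frames, patterns):
    """Pool (T, D) frame features into (K, D) summaries, one per pattern vector."""
    scores = patterns @ frames.T                            # (K, T) pattern-to-frame affinity
    scores = scores - scores.max(axis=1, keepdims=True)     # stabilize the softmax
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=1, keepdims=True)  # softmax over frames
    return weights @ frames                                 # (K, D), whatever the length T

def similarity(a, b):
    """Mean cosine similarity between corresponding pattern summaries."""
    a = a / np.linalg.norm(a, axis=1, keepdims=True)
    b = b / np.linalg.norm(b, axis=1, keepdims=True)
    return float(np.mean(np.sum(a * b, axis=1)))

def classify(video, support, patterns):
    """Return the label of the support example whose summary is most similar."""
    summary = summarize(video, patterns)
    scores = {label: similarity(summary, summarize(example, patterns))
              for label, example in support.items()}
    return max(scores, key=scores.get)

# Toy data (assumption): each action is one feature direction plus small noise.
rng = np.random.default_rng(0)
dim, num_patterns = 16, 4
# Assumption: random pattern vectors stand in for whatever a trained model would use.
patterns = rng.normal(size=(num_patterns, dim))

def toy_video(direction, num_frames):
    return np.eye(dim)[direction] + 0.01 * rng.normal(size=(num_frames, dim))

# One example video per action, with different lengths (8 and 12 frames).
support = {"wave": toy_video(0, 8), "jump": toy_video(1, 12)}
query_video = toy_video(0, 20)   # a 20-frame "wave" clip to classify

predicted = classify(query_video, support, patterns)
print(predicted)
```

Because every video is reduced to the same number of summaries, clips of 8, 12, and 20 frames can be compared directly, with no frame-to-frame alignment step.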

A key strength of this technology is its robustness to variations in action speed and duration. Even when the same action is performed faster, slower, or for a different length of time because of individual habits or filming conditions, the algorithm still captures the essence of the action and recognizes it reliably.
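One way to see why a summary-based comparison can tolerate speed changes is the idealized Python sketch below. It is an illustration under stated assumptions, not the method from the paper. A hypothetical `pool_patterns` function takes a softmax-weighted average over frames, and "slow motion" is modeled by showing every frame twice. Real speed changes are not exact frame repetitions, so in practice the invariance would be approximate at best.

```python
import numpy as np

def pool_patterns(frames, patterns):
    """Softmax-weighted average of (T, D) frames for each of K pattern vectors."""
    scores = patterns @ frames.T
    scores = scores - scores.max(axis=1, keepdims=True)     # stabilize the softmax
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=1, keepdims=True)  # normalize over frames
    return weights @ frames

rng = np.random.default_rng(0)
patterns = rng.normal(size=(4, 16))        # assumption: random stand-in pattern vectors
normal_speed = rng.normal(size=(8, 16))    # 8 frames of made-up features
slow_motion = np.repeat(normal_speed, 2, axis=0)  # same action, every frame shown twice

# Duplicated frames split the softmax weight between them, so the summary is unchanged.
same_summary = np.allclose(pool_patterns(normal_speed, patterns),
                           pool_patterns(slow_motion, patterns))

# A naive frame-by-frame comparison of the first 8 frames sees two different clips.
framewise_gap = float(np.linalg.norm(normal_speed - slow_motion[:8]))

print(same_summary, framewise_gap > 0)
```

The pooled summaries of the two clips match, while the frame-by-frame difference is nonzero even though both clips show the same action.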

The work has been internationally recognized for its academic significance and technical excellence. The paper was selected for an oral presentation at the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR 2025), held in Nashville in June 2025, and is published in the conference proceedings.

This technology is expected to play an important role in a wide range of applications that require advanced video understanding, including sports motion analysis, intelligent security systems for detecting dangerous situations, and autonomous behavior learning for robots.

More information

SuBeen Lee et al, Temporal Alignment-Free Video Matching for Few-Shot Action Recognition, 2025 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) (2025). DOI: 10.1109/cvpr52734.2025.00509
