March 19, 2026 by Jeff Shepard

Collected at: https://www.eeworldonline.com/how-does-the-ieee-magnet-challenge-use-ai-for-power-magnetics-modeling/

The IEEE Power Electronics Society (PELS) Google-Tesla MagNet Challenge is an annual competition. It’s designed to accelerate innovation in magnetic modeling using artificial intelligence (AI). This article reviews some of the highlights from the first two MagNet Challenges in 2023 and 2024.

The first installment ran from February to December 2023, with the winners announced in March 2024. Winners of the 2025 MagNet Challenge will be announced at the 2026 IEEE Applied Power Electronics Conference (APEC) in San Antonio, Texas, in March.

Competitors in the inaugural 2023 MagNet Challenge included 39 undergraduate and graduate teams. The 2023 winners included Paderborn University, Fuzhou University, University of Bristol, University of Sydney, TU Delft, and Mondragon University, with additional recognition for the Indian Institute of Science (IISc).

Teams in the MagNet Challenge use machine learning (ML) models based on neural networks (NNs) trained on a large open-source database provided by the Challenge organizers. The database began with over 500,000 data points in the inaugural competition and has grown to over 2 million data points for 15 different materials.

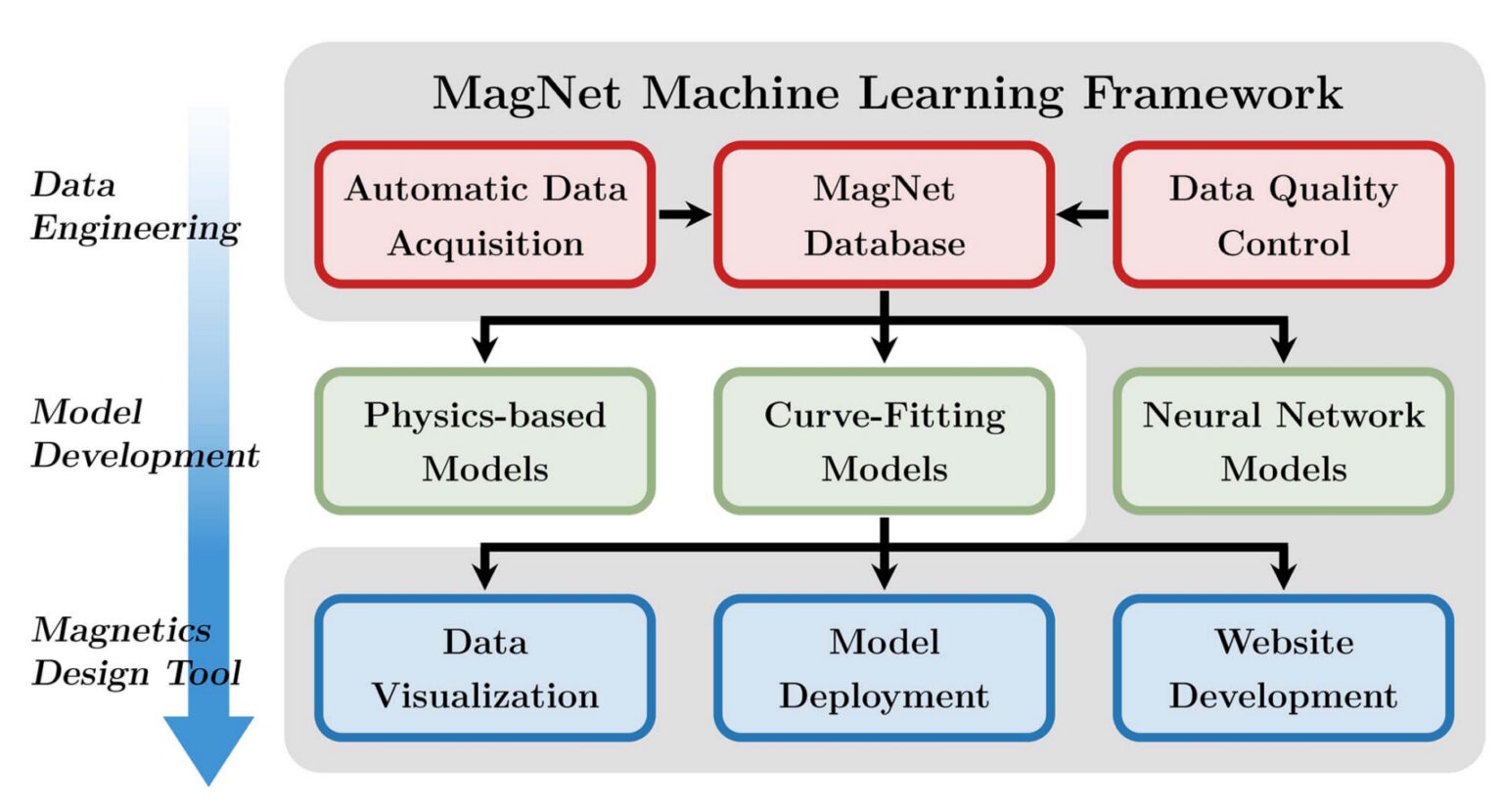

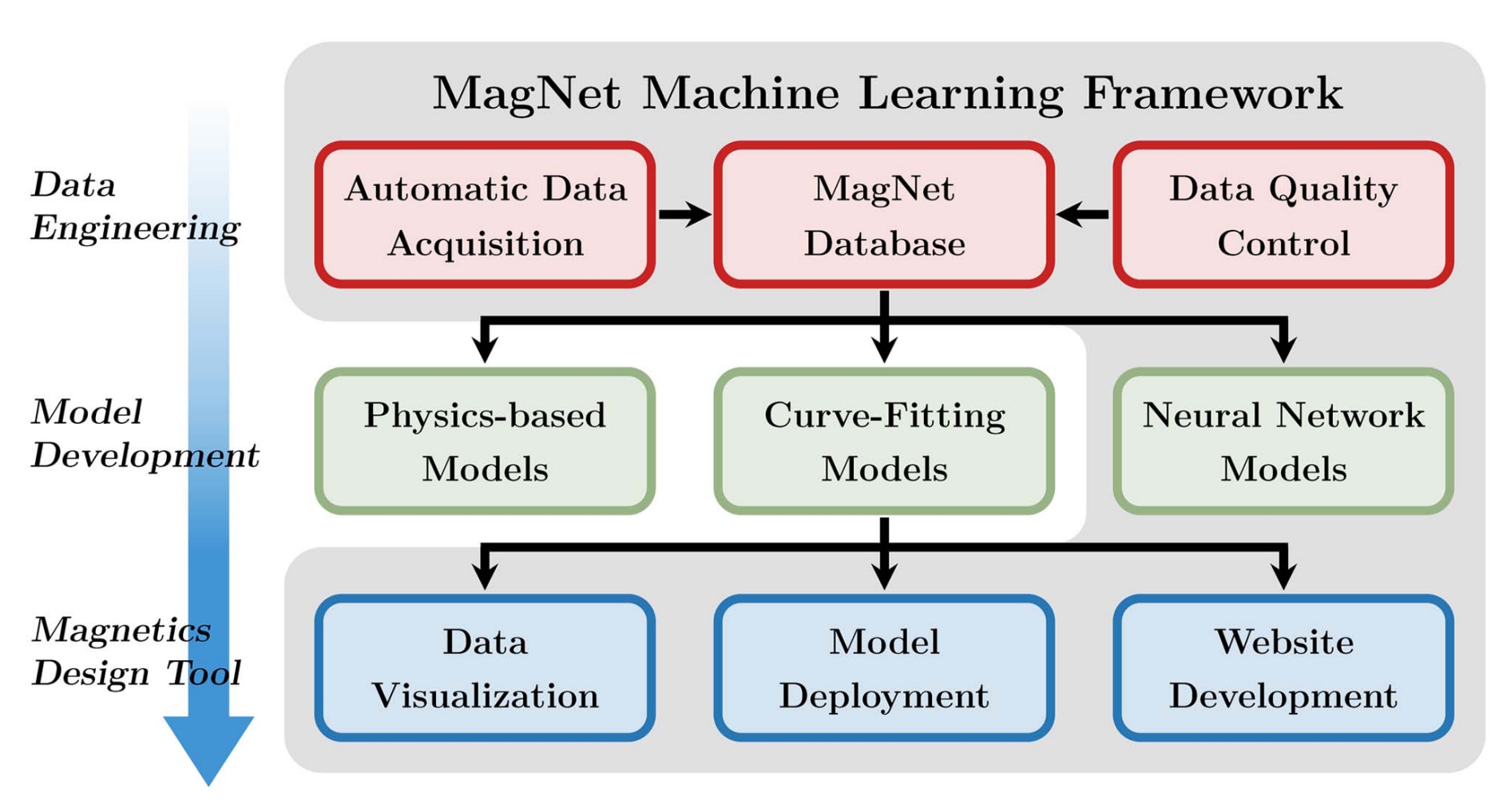

The database, which includes diverse, real-world measurements of voltage, current, and flux density, is designed to allow models to learn non-linear behaviors. Key elements in the Challenge include data engineering, model development, and a magnetic design tool (Figure 1).

Advanced NN architectures

The NN architectures employed vary widely among MagNet competitors. The smallest models have used as few as 60 parameters, while others have had over 10,000 parameters. The HARDCORE approach (H-field and power loss estimation for arbitrary waveforms with residual, dilated convolutional neural networks in ferrite cores), a convolutional NN that was part of the winning solution in 2023, achieved its results with only 1,755 parameters.

Teams use a variety of advanced NN architectures to try to “best” the competition:

- Long short-term memory (LSTM) networks are used to handle long-term dependencies in magnetic flux data.

- Transformer-based models are used for their high performance in sequence modeling.

- Fourier neural operators (FNO) are applied to map between function spaces.

- Some use Siamese neural networks, which employ two identical weight-sharing subnetworks to compare similar magnetic characteristics.

- Physics-aware AI is used to train models in an unsupervised manner to learn underlying physics, rather than just overfitting to specific data points.

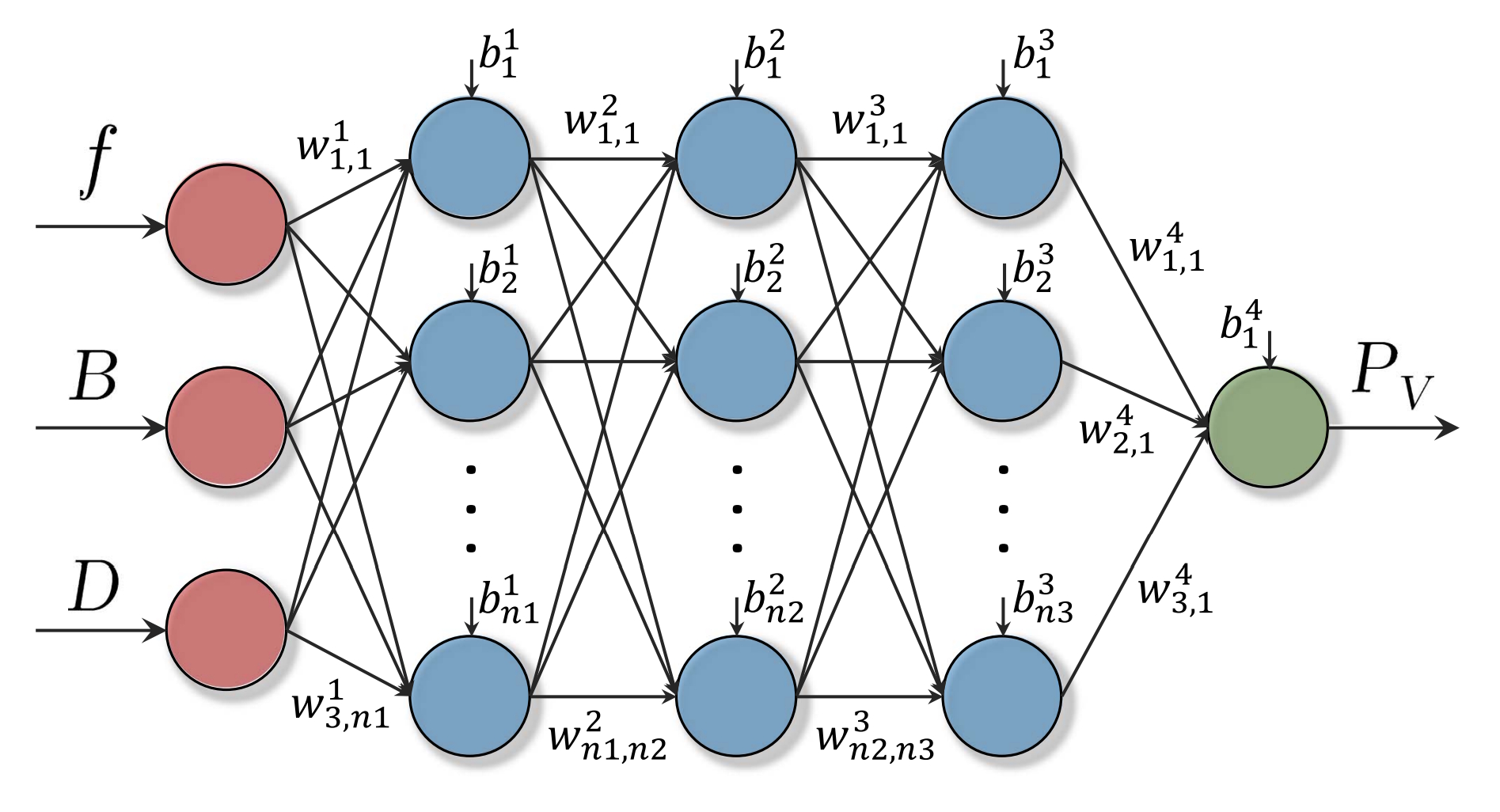

Figure 2 shows the structure of a 4-layer feed-forward NN (FNN) with three inputs (f, B, D) and one output, PV (core loss), used by some of the competitors.

- The excitation frequency is denoted by f.

- B is the magnetic flux density. It can be used to calculate the B-H loop using the magnetic permeability of the material.

- The duty ratio, D, is critical when measuring magnetic parameters, particularly in high-frequency transformer applications, because it directly dictates the magnetization time, which determines whether the material reaches saturation.

For FNNs like the one illustrated in Figure 2, the structure and number of neurons in the hidden layers (blue) can be further optimized. In addition, the inputs can be expanded to include other factors, such as temperature and DC bias. There is a tradeoff among model size, complexity, and accuracy.
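As a rough illustration of the FNN described above, the following Python sketch runs one forward pass through a small fully connected network with inputs (f, B, D) and a single output, and counts its parameters. Several details are assumptions made only for this example and are not taken from any competitor's model: "4-layer" is read as one input layer, two hidden layers, and one output layer; the hidden width of 8, the tanh activation, the log scaling of f and B, and the random (untrained) weights are all hypothetical.

```python
import math
import random


def init_fnn(layer_sizes, seed=0):
    """Create random weights and zero biases for a fully connected network."""
    rng = random.Random(seed)
    params = []
    for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:]):
        scale = 1.0 / math.sqrt(n_in)
        weights = [[rng.uniform(-scale, scale) for _ in range(n_in)]
                   for _ in range(n_out)]
        biases = [0.0] * n_out
        params.append((weights, biases))
    return params


def count_parameters(params):
    """Total number of weights and biases (the 'model size')."""
    return sum(len(w) * len(w[0]) + len(b) for w, b in params)


def forward(params, f_hz, b_tesla, duty):
    """Map (f, B, D) to one scalar output.

    Assumption: f and B are log-scaled before entering the network, and the
    output is interpreted as log10 of the core loss PV.
    """
    x = [math.log10(f_hz), math.log10(b_tesla), duty]
    for i, (weights, biases) in enumerate(params):
        z = [sum(w * xi for w, xi in zip(row, x)) + b
             for row, b in zip(weights, biases)]
        is_last = i == len(params) - 1
        # tanh on hidden layers, linear output layer
        x = z if is_last else [math.tanh(v) for v in z]
    return x[0]


# Hypothetical layout: 3 inputs, two hidden layers of 8 neurons, 1 output.
LAYER_SIZES = [3, 8, 8, 1]
params = init_fnn(LAYER_SIZES)
n_params = count_parameters(params)
log_pv = forward(params, f_hz=100e3, b_tesla=0.1, duty=0.5)

print("parameters:", n_params)
print("untrained output (log10 PV):", log_pv)
```

With this layout the network has (3·8 + 8) + (8·8 + 8) + (8·1 + 1) = 113 parameters. Widening the hidden layers or adding inputs such as temperature raises that count quickly, which is the size-versus-accuracy tradeoff noted above.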

Leveling the field

To provide a level playing field for the competition, the database is generated using a common experimental setup and circuit configuration (Figure 3):

- The system uses a standardized two-winding method for magnetic characterization.

- It includes a common power stage designed to generate arbitrary excitation waveforms, for example, a power amplifier for sinusoidal excitation and a single-phase bridge for pulse-width modulated (PWM) waveforms.

- Automatic data acquisition reduces human error in the measurement process and delivers accurate and consistent data to the competitors.

- Finally, the data is collected under controlled temperature and magnetic material conditions to allow for direct comparison between different data points.
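To make the two-winding method more concrete, here is a minimal Python sketch of how volumetric core loss and flux density are commonly derived from a secondary-voltage waveform and a primary-current waveform. It uses the textbook relations H = N1·i/le and B = (1/(N2·Ae))·∫v dt, which give PV = (N1/N2)·mean(v·i)/(Ae·le). The turns counts, core dimensions, and synthetic sinusoidal waveforms below are hypothetical values chosen for illustration; they are not the MagNet measurement settings, and the organizers' actual processing pipeline may differ.

```python
import math


def core_loss_density(v_sec, i_pri, n_pri, n_sec, area_m2, path_len_m):
    """Average volumetric core loss in W/m^3.

    Assumes the samples are uniformly spaced and span whole periods.
    """
    mean_power = sum(v * i for v, i in zip(v_sec, i_pri)) / len(v_sec)
    return (n_pri / n_sec) * mean_power / (area_m2 * path_len_m)


def flux_density(v_sec, dt, n_sec, area_m2):
    """Integrate the secondary voltage to B(t) in tesla, DC offset removed."""
    b, acc = [], 0.0
    for v in v_sec:
        b.append(acc / (n_sec * area_m2))
        acc += v * dt
    mean_b = sum(b) / len(b)
    return [x - mean_b for x in b]


# Hypothetical test setup (all values are assumptions for this sketch).
FREQ_HZ = 100e3
N_SAMPLES = 1000
N_PRI, N_SEC = 5, 5
AREA_M2 = 50e-6      # effective core cross-section Ae
PATH_LEN_M = 0.1     # effective magnetic path length le
V_PEAK, I_PEAK = 10.0, 0.2
PHASE_LAG = math.radians(85.0)  # current lags voltage by slightly under 90 degrees

dt = 1.0 / (FREQ_HZ * N_SAMPLES)
omega = 2.0 * math.pi * FREQ_HZ
t = [k * dt for k in range(N_SAMPLES)]
v_sec = [V_PEAK * math.cos(omega * tk) for tk in t]
i_pri = [I_PEAK * math.cos(omega * tk - PHASE_LAG) for tk in t]

p_v = core_loss_density(v_sec, i_pri, N_PRI, N_SEC, AREA_M2, PATH_LEN_M)
b_peak = max(flux_density(v_sec, dt, N_SEC, AREA_M2))

print("core loss density (W/m^3):", p_v)
print("peak flux density (T):", b_peak)
```

Because the loss depends on the small in-phase component of a current that is nearly 90 degrees out of phase with the voltage, slight phase errors in the measurement translate into large loss errors. That sensitivity is one reason a common, automated setup matters for consistent data.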

Making the grade

The judges and organizing committee members for the MagNet Challenge vary from year to year. The AI models are expected to correctly simulate complex, high-frequency magnetic losses and hysteresis loops.

Entries are judged on the accuracy and efficiency of ML models designed to predict power magnetic core loss. Evaluation focuses on the 95th percentile error of core loss predictions, model size (number of parameters), and the capability to generalize across different materials and operating conditions.
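The 95th percentile error criterion can be sketched in a few lines of Python. This example assumes the error is the relative error between predicted and measured core loss, and it uses a linearly interpolated percentile; the article does not spell out the organizers' exact definition, so both choices are assumptions. The measured and predicted values are made-up numbers, not MagNet data.

```python
import math


def relative_errors(predicted, measured):
    """Absolute relative error of each prediction against its measurement."""
    return [abs(p - m) / abs(m) for p, m in zip(predicted, measured)]


def percentile(values, q):
    """q-th percentile (0-100) using linear interpolation between ranks."""
    ordered = sorted(values)
    pos = (len(ordered) - 1) * q / 100.0
    lo, hi = math.floor(pos), math.ceil(pos)
    return ordered[lo] + (ordered[hi] - ordered[lo]) * (pos - lo)


# Made-up data: 20 measurements of 100 (arbitrary units) and predictions
# that overshoot by 1, 2, ..., 20 units.
measured = [100.0] * 20
predicted = [100.0 + k for k in range(1, 21)]

errors = relative_errors(predicted, measured)
p95 = percentile(errors, 95)
mean_error = sum(errors) / len(errors)

print("mean relative error:", mean_error)
print("95th percentile relative error:", p95)
```

A percentile-based metric penalizes models that are accurate on average but fail badly on a minority of operating points, which an average error alone would hide.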

Summary

The MagNet Challenge is an open-source research initiative designed to advance data-driven, ML-based modeling of power magnetic materials. Its primary purpose is to leverage ML to improve core loss prediction accuracy, reduce design optimization time, and create standardized, open-source NN models. The winners of the third annual MagNet Challenge will be announced at the 2026 IEEE APEC in San Antonio, Texas, in March.

References

DataX – researchers use machine learning to model power magnetic material characteristics in advanced power electronics, Center for Statistics and Machine Learning

How MagNet: Machine Learning Framework for Modeling Power Magnetic Material Characteristics, IEEE

Machine-Learned Models for Power Magnetic Material Characteristics, IEEE

MagNet Challenge for Data-Driven Power Magnetics Modeling, IEEE

Physics meets AI: trading accuracy for speed, Netify
